Enable faster training with Amazon SageMaker data parallel library

AWS Machine Learning

DECEMBER 5, 2023

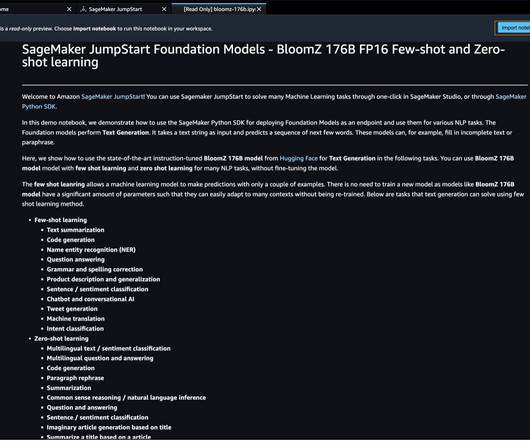

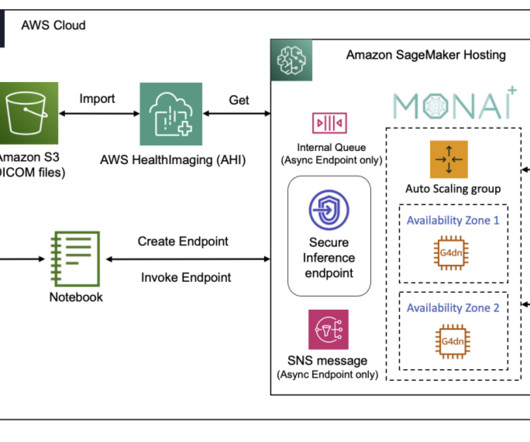

Customers are now training LLMs of unprecedented size, ranging from 1 billion to over 175 billion parameters.

Solution overview

Traditional data parallel training replicates an entire model across multiple GPUs, with each replica training on a different shard of the dataset. However, some of today’s large models (e.g.,
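
The traditional data parallel scheme described above can be illustrated with a toy simulation. This is a minimal sketch in plain Python, not the SageMaker data parallel library or any GPU code: the workers are simulated in a single process, and the names `shard`, `local_gradient`, `all_reduce_mean`, and `train` are hypothetical helpers introduced only for illustration. Each simulated worker holds a full copy of a one-parameter model, computes a gradient on its own shard, and then all workers apply the same averaged gradient so the replicas stay in sync.

```python
# Toy simulation of data parallel training (assumption: workers are simulated
# in one process; no GPUs, no SageMaker APIs, no real collective library).

def shard(data, num_workers):
    """Split the dataset into disjoint shards, one per worker."""
    return [data[i::num_workers] for i in range(num_workers)]

def local_gradient(w, examples):
    """Mean-squared-error gradient for the model y = w * x on one shard."""
    return sum(2 * (w * x - y) * x for x, y in examples) / len(examples)

def all_reduce_mean(values):
    """Stand-in for an all-reduce: every worker receives the average."""
    return sum(values) / len(values)

def train(data, num_workers=4, lr=0.01, steps=200):
    shards = shard(data, num_workers)
    # Each worker holds a full, identical copy of the model (here one weight).
    replicas = [0.0] * num_workers
    for _ in range(steps):
        # Every replica computes a gradient on its own shard only.
        grads = [local_gradient(w, s) for w, s in zip(replicas, shards)]
        # Gradients are averaged across workers, then applied everywhere.
        g = all_reduce_mean(grads)
        replicas = [w - lr * g for w in replicas]
    return replicas

# Synthetic dataset generated from y = 3x, so the target weight is 3.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
replicas = train(data)
print(replicas)
```

Because every replica starts from the same weight and applies the same averaged gradient, the copies remain identical after each step. With equal-sized shards, the averaged gradient matches the gradient over the full batch.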
