AWS Machine Learning Blog

Build a classification pipeline with Amazon Comprehend custom classification (Part I)

“Data locked away in text, audio, social media, and other unstructured sources can be a competitive advantage for firms that figure out how to use it”

Only 18% of organizations in a 2019 survey by Deloitte reported being able to take advantage of unstructured data. The majority of data, between 80% and 90%, is unstructured data. That is a big untapped resource that has the potential to give businesses a competitive edge if they can find out how to use it. It can be difficult to find insights from this data, particularly if efforts are needed to classify, tag, or label it. Amazon Comprehend custom classification can be useful in this situation. Amazon Comprehend is a natural-language processing (NLP) service that uses machine learning to uncover valuable insights and connections in text.

Document categorization or classification has significant benefits across business domains:

- Improved search and retrieval – Categorizing documents into relevant topics or categories makes it much easier for users to search for and retrieve the documents they need. They can search within specific categories to narrow down results.

- Knowledge management – Categorizing documents in a systematic way helps to organize an organization’s knowledge base. It makes it easier to locate relevant information and see connections between related content.

- Streamlined workflows – Automatic document sorting can help streamline many business processes like processing invoices, customer support, or regulatory compliance. Documents can be automatically routed to the right people or workflows.

- Cost and time savings – Manual document categorization is tedious, time-consuming, and expensive. AI techniques can take over this mundane task and categorize thousands of documents in a short time at a much lower cost.

- Insight generation – Analyzing trends in document categories can provide useful business insights. For example, an increase in customer complaints in a product category could signify some issues that need to be addressed.

- Governance and policy enforcement – Setting up document categorization rules helps to ensure that documents are classified correctly according to an organization’s policies and governance standards. This allows for better monitoring and auditing.

- Personalized experiences – In contexts like website content, document categorization allows for tailored content to be shown to users based on their interests and preferences as determined from their browsing behavior. This can increase user engagement.

The complexity of developing a bespoke classification machine learning model depends on factors such as data quality, algorithm choice, scalability, and domain knowledge. It’s essential to start with a clear problem definition and clean, relevant data, and then work gradually through the stages of model development. With Amazon Comprehend custom classification, however, businesses can create their own machine learning models that automatically classify text documents into categories or tags that meet business-specific requirements and map to business technology and document categories. Because human tagging or categorization is no longer necessary, this can save businesses a lot of time, money, and labor. We have made this process simple by automating the whole training pipeline.

In the first part of this multi-part blog series, you will learn how to create a scalable training pipeline and prepare training data for Amazon Comprehend custom classification models. We introduce a custom classifier training pipeline that can be deployed in your AWS account with a few clicks. We use the BBC news dataset to train a classifier that identifies the class (for example, politics or sports) that a document belongs to. The pipeline enables your organization to respond rapidly to changes and train new models without having to start from scratch each time. You can easily scale up and train multiple models based on demand.

Prerequisites

- An active AWS account (Click here to create a new AWS account)

- Access to Amazon Comprehend, Amazon S3, AWS Lambda, AWS Step Functions, Amazon SNS, and AWS CloudFormation

- Training data (semi-structured or text), prepared as described in the following section

- Basic knowledge of Python and machine learning in general

Prepare training data

This solution accepts input in either text format (for example, CSV) or semi-structured format (for example, PDF).

Text input

Amazon Comprehend custom classification supports two modes: multi-class and multi-label.

In multi-class mode, each document can have one and only one class assigned to it. The training data should be prepared as a two-column CSV file, with each line of the file containing a single class and the text of a document that demonstrates the class.

Example for BBC news dataset:

In multi-label mode, each document has at least one class assigned to it, but can have more. The training data should be prepared as a two-column CSV file, with each line of the file containing one or more classes and the text of the training document. When a document has more than one class, separate the classes with a delimiter.

Do not include a header in the CSV file for either training mode.
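The two CSV layouts described above can be sketched with Python's standard `csv` module. This is only an illustration: the rows are made-up placeholders rather than records from the BBC news dataset, the `|` delimiter is an assumed choice, and collapsing whitespace so that each document stays on one line is a design choice of this sketch, not a documented requirement.

```python
import csv
import io


def write_training_csv(rows, label_delimiter="|"):
    """Serialize (labels, text) pairs as a header-less, two-column CSV string.

    `labels` is a single class name (multi-class mode) or a list of class
    names (multi-label mode). The delimiter is an assumed choice.
    """
    buffer = io.StringIO()
    writer = csv.writer(buffer, quoting=csv.QUOTE_MINIMAL, lineterminator="\n")
    for labels, text in rows:
        if isinstance(labels, str):
            labels = [labels]
        # Collapse newlines and repeated spaces so each document is one line.
        writer.writerow([label_delimiter.join(labels), " ".join(text.split())])
    return buffer.getvalue()


# Hypothetical rows for illustration only.
multi_class_rows = [
    ("sport", "The home side won the final, 2-1."),
    ("politics", "Parliament debated the new budget today."),
]
multi_label_rows = [
    (["business", "politics"], "Ministers announced a tax cut for small firms."),
    (["sport"], "The striker signed a new contract."),
]

multi_class_csv = write_training_csv(multi_class_rows)
multi_label_csv = write_training_csv(multi_label_rows)
print(multi_class_csv)
print(multi_label_csv)
```

The `csv` writer quotes any document text that contains a comma, which keeps the file at exactly two columns.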

Semi-structured input

As of 2023, Amazon Comprehend supports training models with semi-structured documents. The training data for semi-structured input consists of a set of labeled documents, which can be pre-identified documents from a document repository that you already have access to. The following is an example of the annotations CSV file required for training (Sample Data):

The annotations CSV file contains three columns: the first column contains the label for the document, the second column contains the document name (that is, the file name), and the last column contains the page number of the document that you want to include in the training dataset. In most cases, if the annotations CSV file is located in the same folder as all the other documents, you only need to specify the document name in the second column. However, if the CSV file is located elsewhere, you need to specify the path to the document in the second column, such as path/to/prefix/document1.pdf.

For details on how to prepare your training data, refer to the Amazon Comprehend documentation.
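As a rough sketch of the three-column annotations file described above, the following Python builds one row per labeled page. The label `invoice`, the page numbers, and the helper names are hypothetical; only the column order (label, document name or path, page number) comes from the description above.

```python
import csv
import io
import posixpath


def annotation_rows(label, document_name, pages, prefix=""):
    """Return one (label, document path, page number) row per page.

    `prefix` is only needed when the annotations file does not sit in the
    same folder as the documents.
    """
    path = posixpath.join(prefix, document_name) if prefix else document_name
    return [(label, path, page) for page in pages]


def write_annotations_csv(rows):
    """Serialize annotation rows as a header-less CSV string."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(rows)
    return buffer.getvalue()


# Hypothetical example: two pages of one document, stored under a prefix.
rows = annotation_rows("invoice", "document1.pdf", [1, 2], prefix="path/to/prefix")
annotations_csv = write_annotations_csv(rows)
print(annotations_csv)
```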

Solution overview

- The Amazon Comprehend training pipeline starts when training data (a .csv file for text input, or an annotations .csv file for semi-structured input) is uploaded to a dedicated Amazon Simple Storage Service (Amazon S3) bucket.

- An Amazon S3 trigger invokes an AWS Lambda function every time an object is uploaded to the specified Amazon S3 location. The function retrieves the source bucket name and the key name of the uploaded object and passes them to the training AWS Step Functions workflow.

- After the training step function receives the training data bucket name and object key name as input parameters, a custom model training workflow kicks off as a series of Lambda functions:

- StartComprehendTraining: This AWS Lambda function defines a ComprehendClassifier object depending on the type of input files (that is, text or semi-structured) and then kicks off an Amazon Comprehend custom classification training task by calling the create_document_classifier application programming interface (API), which returns the Amazon Resource Name (ARN) of the training job. The function then checks the status of the training job by invoking the describe_document_classifier API. Finally, it returns the training job ARN and job status as output to the next stage of the training workflow.

- GetTrainingJobStatus: This AWS Lambda function checks the status of the training job every 15 minutes by calling the describe_document_classifier API, until the training job status changes to Complete or Failed.

- GenerateMultiClass or GenerateMultiLabel: If you select yes for the performance report when launching the stack, one of these two AWS Lambda functions analyzes your Amazon Comprehend model outputs, generates a per-class performance analysis, and saves it to Amazon S3.

- GenerateMultiClass: This AWS Lambda function is called if your input is MultiClass and you select yes for the performance report.

- GenerateMultiLabel: This AWS Lambda function is called if your input is MultiLabel and you select yes for the performance report.

- After training completes successfully, the solution generates the following outputs:

- Custom Classification Model: A trained model ARN will be available in your account for future inference work.

- Confusion Matrix [Optional]: A confusion matrix (confusion_matrix.json) will be available in the user-defined output Amazon S3 path, depending on the user selection.

- Amazon Simple Notification Service notification [Optional]: A notification email about the training job status will be sent to the subscribers, depending on the initial user selection.
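The trigger and training steps in the workflow above can be sketched as follows. This is not the code deployed by the solution; it is a minimal illustration, for text input only, of reading the bucket and key from an S3 event and calling the two Amazon Comprehend APIs named above. The function names, the `|` default delimiter, and the returned dictionary keys are assumptions. The `comprehend` argument is expected to be a client such as `boto3.client("comprehend")`.

```python
from urllib.parse import unquote_plus


def extract_s3_location(event):
    """Return (bucket, key) from the first record of an S3 event notification."""
    record = event["Records"][0]["s3"]
    # Object keys arrive URL-encoded in S3 event notifications.
    return record["bucket"]["name"], unquote_plus(record["object"]["key"])


def build_classifier_request(name, role_arn, bucket, key, output_s3_uri,
                             multi_class=True, label_delimiter="|",
                             language="en"):
    """Build keyword arguments for create_document_classifier (text input)."""
    input_config = {
        "DataFormat": "COMPREHEND_CSV",
        "S3Uri": f"s3://{bucket}/{key}",
    }
    if not multi_class:
        # A label delimiter only applies in multi-label mode.
        input_config["LabelDelimiter"] = label_delimiter
    return {
        "DocumentClassifierName": name,
        "DataAccessRoleArn": role_arn,
        "LanguageCode": language,
        "Mode": "MULTI_CLASS" if multi_class else "MULTI_LABEL",
        "InputDataConfig": input_config,
        "OutputDataConfig": {"S3Uri": output_s3_uri},
    }


def get_status(comprehend, classifier_arn):
    """Return the current training status reported by Amazon Comprehend."""
    response = comprehend.describe_document_classifier(
        DocumentClassifierArn=classifier_arn
    )
    return response["DocumentClassifierProperties"]["Status"]


def start_training(comprehend, request):
    """Start training and return the ARN plus the initial status."""
    response = comprehend.create_document_classifier(**request)
    arn = response["DocumentClassifierArn"]
    return {"ClassifierArn": arn, "Status": get_status(comprehend, arn)}
```

In a Step Functions workflow, a wait state followed by a task that calls something like `get_status` would reproduce the periodic status check, which avoids keeping a Lambda function running for the whole training job.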

Walkthrough

Launching the solution

To deploy your pipeline, complete the following steps:

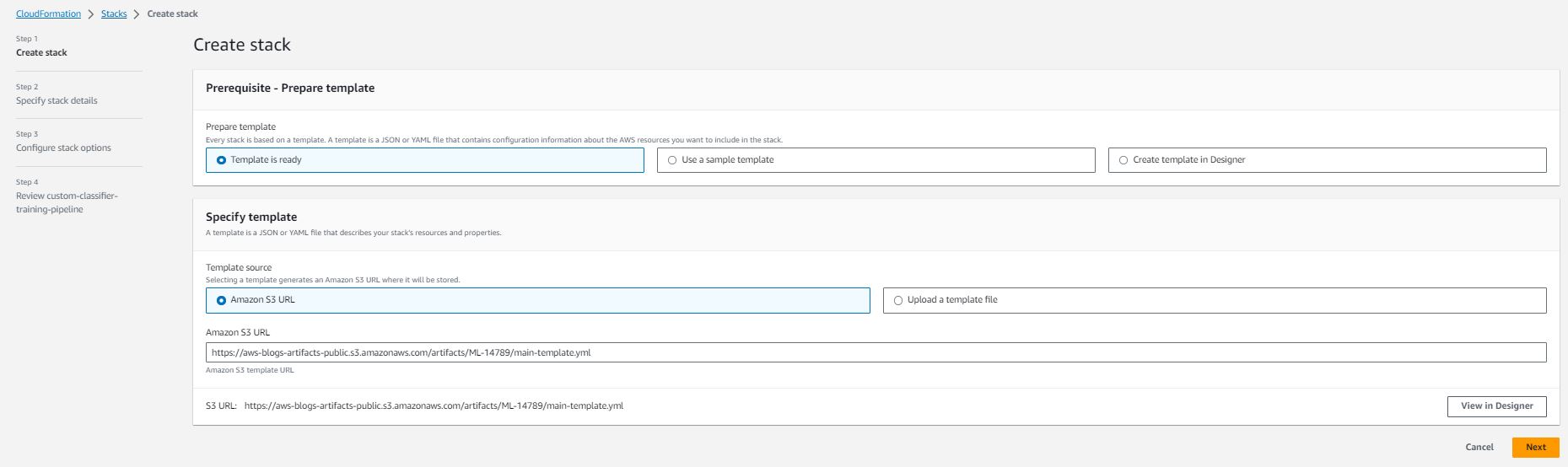

- Choose the Launch Stack button:

- Choose Next

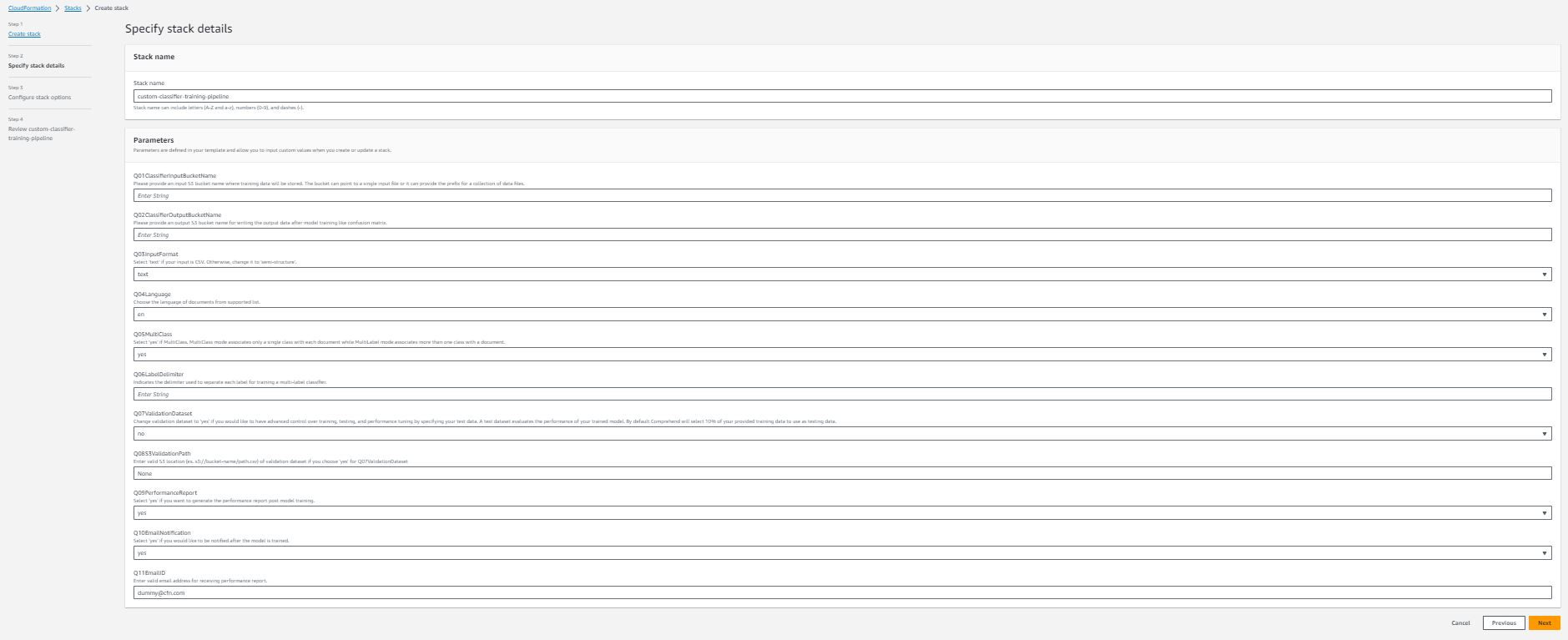

- Specify the pipeline details with the options fitting your use case:

Information for each stack detail:

- Stack name (Required) – The name you specify for this AWS CloudFormation stack. The name must be unique in the Region in which you’re creating it.

- Q01ClassifierInputBucketName (Required) – The Amazon S3 bucket name to store your input data. It must be globally unique. The AWS CloudFormation stack creates the bucket for you when it is launched.

- Q02ClassifierOutputBucketName (Required) – The Amazon S3 bucket name to store outputs from Amazon Comprehend and the pipeline. It should also be a globally unique name.

- Q03InputFormat – A dropdown selection. Choose text (if your training data is CSV files) or semi-structure (if your training data is semi-structured, for example, PDF files), based on your data input format.

- Q04Language – A dropdown selection. Choose the language of your documents from the supported list. Note that currently only English is supported if your input format is semi-structure.

- Q05MultiClass – A dropdown selection. Select yes if your input is in MultiClass mode. Otherwise, select no.

- Q06LabelDelimiter – Only required if your Q05MultiClass answer is no. This delimiter is used in your training data to separate each class.

- Q07ValidationDataset – A dropdown selection. Change the answer to yes if you want to test the performance of the trained classifier with your own test data.

- Q08S3ValidationPath – Only required if your Q07ValidationDataset answer is yes.

- Q09PerformanceReport – A dropdown selection. Select yes if you want to generate the class-level performance report after model training. The report will be saved in the output bucket you specified in Q02ClassifierOutputBucketName.

- Q10EmailNotification – A dropdown selection. Select yes if you want to receive a notification after the model is trained.

- Q11EmailID – Enter a valid email address for receiving the performance report notification. Note that you must confirm the subscription from your email after the AWS CloudFormation stack is launched before you can receive a notification when training is completed.

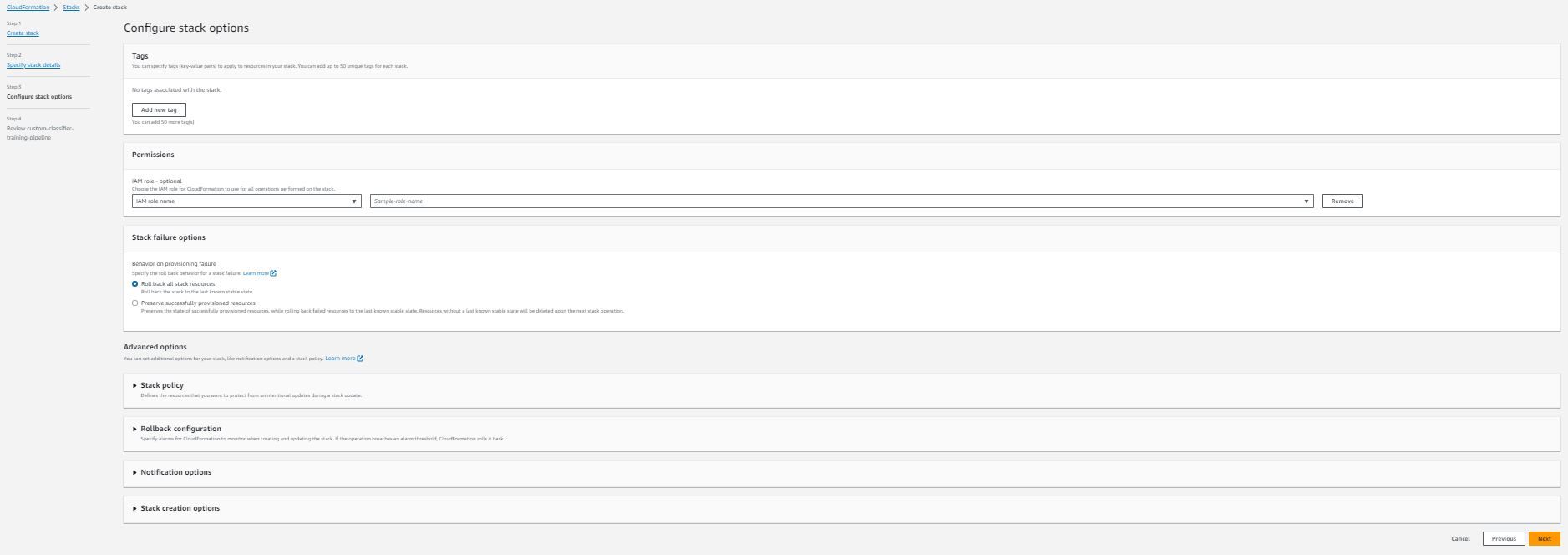

- In the Configure stack options section, add optional tags, permissions, and other advanced settings.

- Choose Next

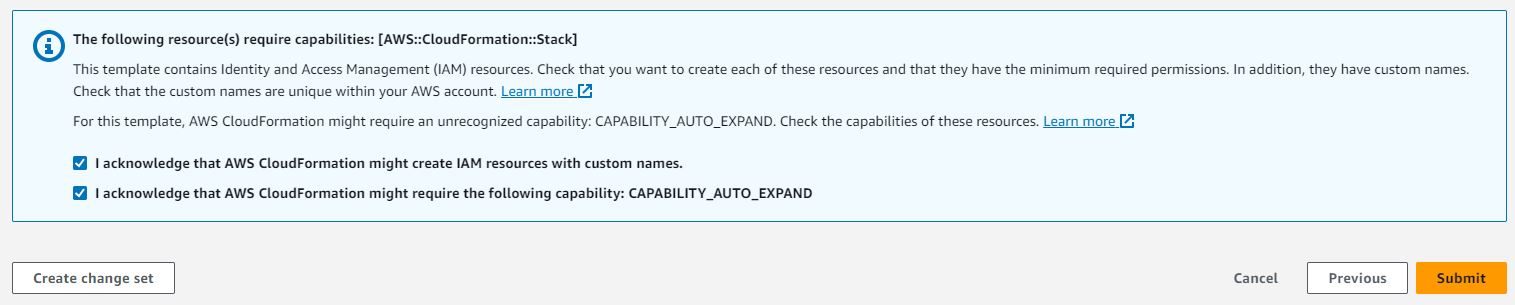

- Review the stack details and select I acknowledge that AWS CloudFormation might create AWS IAM resources.

- Choose Submit. This initiates pipeline deployment in your AWS account.

- After the stack is deployed successfully, you can start using the pipeline. Create a /training-data folder under the Amazon S3 location you specified for input. Note: Amazon S3 automatically applies server-side encryption (SSE-S3) to each new object unless you specify a different encryption option. Refer to Data protection in Amazon S3 for more details on data protection and encryption in Amazon S3.

- Upload your training data to the folder. (If the training data is semi-structured, upload all the PDF files before uploading the .csv annotations file.)
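The upload order in the last step matters because uploading the .csv file is what starts the pipeline. A minimal sketch of scripting that order is shown below. The helper names and the `training-data` prefix default are assumptions, and `s3_client` is expected to be a client such as `boto3.client("s3")`.

```python
import os


def upload_order(file_names):
    """Return the file names with every .csv file moved after the others."""
    # sorted() is stable, so the relative order within each group is kept.
    return sorted(file_names, key=lambda name: name.lower().endswith(".csv"))


def upload_training_data(s3_client, bucket, local_paths, prefix="training-data"):
    """Upload documents first and annotation/label CSV files last."""
    uploaded_keys = []
    for path in upload_order(local_paths):
        key = f"{prefix}/{os.path.basename(path)}"
        s3_client.upload_file(path, bucket, key)
        uploaded_keys.append(key)
    return uploaded_keys
```

For text input there is only the single .csv file, so the ordering has no effect in that case.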

You’re done! You’ve successfully deployed your pipeline, and you can check the pipeline status in the deployed step function. (After training completes, the trained model appears in your Amazon Comprehend custom classification panel.)

If you choose the model and its version in the Amazon Comprehend console, you can see more details about the model you just trained. These include the mode you selected, which corresponds to the option Q05MultiClass, the number of labels, and the number of training and test documents in your training data. You can also check the overall performance there; however, if you want to check detailed performance for each class, refer to the Performance Report generated by the deployed pipeline.
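To illustrate what a per-class performance analysis involves, the sketch below derives precision, recall, and F1 for each class from a confusion matrix. It is not the report code used by the pipeline. It assumes you have already loaded a list of labels and a square matrix (for example, from confusion_matrix.json), and it assumes that rows are actual classes and columns are predicted classes; check that orientation against your own output before relying on the numbers.

```python
def per_class_metrics(labels, matrix):
    """Compute precision, recall, and F1 per class from a confusion matrix.

    Assumption: matrix[i][j] counts documents whose actual class is
    labels[i] and whose predicted class is labels[j].
    """
    metrics = {}
    for i, label in enumerate(labels):
        true_positive = matrix[i][i]
        actual_total = sum(matrix[i])
        predicted_total = sum(row[i] for row in matrix)
        precision = true_positive / predicted_total if predicted_total else 0.0
        recall = true_positive / actual_total if actual_total else 0.0
        denominator = precision + recall
        f1 = 2 * precision * recall / denominator if denominator else 0.0
        metrics[label] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics


# Hypothetical two-class example.
example = per_class_metrics(["politics", "sport"], [[2, 1], [0, 3]])
for label, scores in example.items():
    print(label, scores)
```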

Service quotas

Your AWS account has default quotas for Amazon Comprehend and, if your inputs are in semi-structured format, for Amazon Textract. To view the service quotas, refer to the Amazon Comprehend and Amazon Textract documentation.

Clean up

To avoid incurring ongoing charges, delete the resources you created as part of this solution when you’re done.

- On the Amazon S3 console, manually delete the contents of the buckets you created for input and output data.

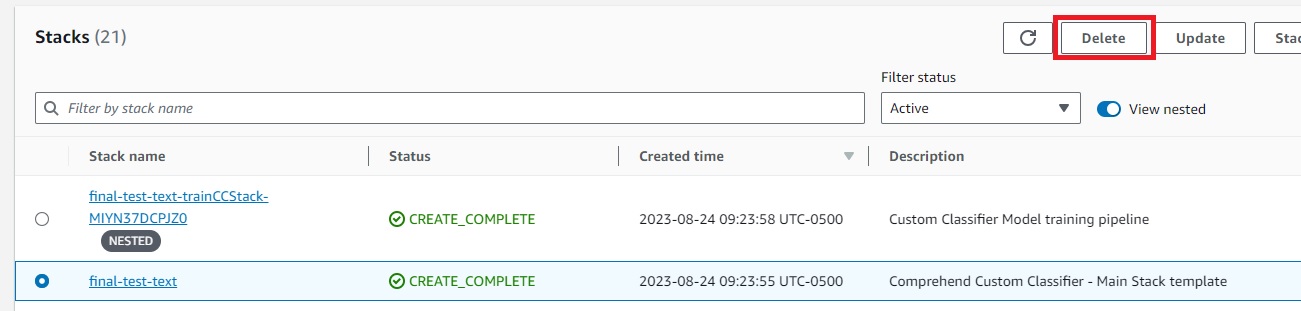

- On the AWS CloudFormation console, choose Stacks in the navigation pane.

- Select the main stack and choose Delete.

This automatically deletes the deployed stack.

- Your trained Amazon Comprehend custom classification model will remain in your account. If you don’t need it anymore, delete the model on the Amazon Comprehend console.
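If you prefer to script the cleanup steps above instead of using the console, a minimal sketch could look like the following. The function name and arguments are hypothetical. The arguments are expected to be `boto3.resource("s3")`, `boto3.client("cloudformation")`, and `boto3.client("comprehend")`. The sketch assumes the buckets are not versioned; versioned buckets would also need their object versions removed.

```python
def clean_up(s3_resource, cloudformation, comprehend,
             bucket_names, stack_name, classifier_arn=None):
    """Empty the buckets, delete the stack, and optionally delete the model."""
    for name in bucket_names:
        # Remove the bucket contents before the stack is deleted.
        s3_resource.Bucket(name).objects.all().delete()

    # Stack deletion is asynchronous; this call only starts it.
    cloudformation.delete_stack(StackName=stack_name)

    if classifier_arn:
        # The trained model lives outside the stack and is deleted separately.
        comprehend.delete_document_classifier(
            DocumentClassifierArn=classifier_arn
        )
```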

Conclusion

In this post, we presented a scalable training pipeline for Amazon Comprehend custom classification models and provided an automated solution for training new models efficiently. The provided AWS CloudFormation template lets you create your own text classification models with little effort and scale to meet demand. The solution adopts the recently announced Euclid feature and accepts inputs in text or semi-structured format.

Now, we encourage you, our readers, to test these tools. You can find more details about preparing training data and understanding the custom classifier metrics in the Amazon Comprehend documentation. Try it out and see firsthand how it can streamline your model training process and enhance efficiency. Please share your feedback with us!

About the Authors

Sandeep Singh is a Senior Data Scientist with AWS Professional Services. He is passionate about helping customers innovate and achieve their business objectives by developing state-of-the-art AI/ML powered solutions. He is currently focused on Generative AI, LLMs, prompt engineering, and scaling Machine Learning across enterprises. He brings recent AI advancements to create value for customers.

Yanyan Zhang is a Senior Data Scientist in the Energy Delivery team with AWS Professional Services. She is passionate about helping customers solve real problems with AI/ML knowledge. Recently, her focus has been on exploring the potential of Generative AI and LLM. Outside of work, she loves traveling, working out and exploring new things.

Wrick Talukdar is a Senior Architect with the Amazon Comprehend Service team. He works with AWS customers to help them adopt machine learning on a large scale. Outside of work, he enjoys reading and photography.
